The KubeCon Europe 2026 conference confirmed what many platform engineers already suspected: Kubernetes is no longer just container orchestration infrastructure; it is becoming the control plane for AI workloads, sovereign cloud architectures, and enterprise-grade policy governance.

This was our first KubeCon, and we went in with a clear set of questions: how is AI changing what platform engineers do day-to-day, and which tooling decisions made now will still look right in three years? Three topics kept surfacing: how the infrastructure layer is adapting to run AI workloads reliably, what governance needs to look like before agents are trusted with production decisions, and what Europe’s regulatory and sovereignty pressures are forcing platform architects to decide.

Here is what we think matters:

Key Takeaways

- Dynamic Resource Allocation (DRA) reached general availability in Kubernetes 1.34, with NVIDIA and Google donating GPU and TPU drivers to CNCF. Kubernetes is now the neutral AI infrastructure control plane

- Kyverno graduated to CNCF’s highest maturity level: policy-as-code at production scale is established, and AI governance is its next frontier

- Ingress NGINX, the community-maintained Ingress controller, has been officially retired, and the Ingress API itself is frozen. Gateway API v1.5 is now the standard path for Kubernetes traffic routing, including LLM inference routing

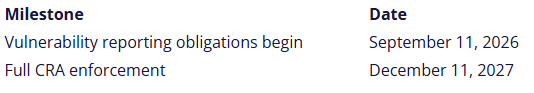

- The EU Cyber Resilience Act has firm deadlines: vulnerability reporting begins September 11, 2026; full enforcement hits December 11, 2027

- Digital sovereignty moved from conference theme to CNCF reference architecture

Kubernetes is now the AI control plane

The clearest signal from Amsterdam was not any single announcement; it was the cumulative weight of what NVIDIA and Google donated, and why they did it.

NVIDIA became a Platinum CNCF member and donated its GPU Dynamic Resource Allocation (DRA) driver to the foundation. Google followed with its TPU DRA driver. Donating infrastructure-level drivers to a neutral foundation is a deliberate architectural bet: these companies want Kubernetes to win the AI infrastructure layer, and they want the community to own the standard rather than any single vendor.

The conformance layer is also catching up. The Kubernetes AI Conformance Program has grown from 18 to 31 certified platforms since its launch, including OVHcloud and Spectro Cloud. It’s the closest thing the industry has to a trusted, neutral standard for AI infrastructure. Third-party validation is provided by a dedicated conformance bot, and later in 2026 the program will extend into Sovereign AI, adding sandboxing and data privacy requirements. That last part matters specifically for regulated industries (finance, healthcare, public sector), where “AI-ready” needs to mean something auditable.

Agentic AI arrived, and governance is still catching up

The tooling moved fast, yet the authorization frameworks did not.

Kelsey Hightower put it plainly in his keynote: “Everyone is a junior engineer when it comes to AI.” Not a pessimistic take, just a useful one. Platform teams building AI infrastructure in 2026 are working at the frontier, not the established middle. At KubeAuto Day, he sharpened the point: “teams rushing to put AI on everything would be better served renaming /etc/crontab to /etc/ai.” For platform engineers, AI adoption isn’t about generating code. It’s about knowing which operations are worth automating and building the infrastructure to do it reliably.

The most concrete pattern was Model Context Protocol (MCP) servers, which connect agents directly to your tooling so they can handle specific operations without human intervention. A Kubernetes MCP server can remediate OOM-killed workloads automatically; a GitHub MCP server can audit manifests against security standards and enforce them in CI. The common thread is agents working inside your existing toolchain, not on top of it. Writing custom MCP servers is starting to look like the next core platform engineering skill, just as writing Kubernetes controllers already is.
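To make the pattern concrete, here is a minimal sketch of the kind of logic a Kubernetes MCP server might expose as a tool for OOM-killed workloads. It is not taken from any specific MCP server: the function names, the 1.5x memory bump, and the restriction to binary memory suffixes (`Ki`, `Mi`, `Gi`) are our assumptions. It reads a field Kubernetes really does report (`lastState.terminated.reason == "OOMKilled"` on a container status) and only proposes a new limit rather than applying it.

```python
# Hypothetical MCP tool logic: find OOM-killed containers in a pod and suggest
# a higher memory limit. Input is a pod object as returned by
# `kubectl get pod <name> -o json`, already parsed into a dict.

# Binary suffixes only (assumption); decimal suffixes such as "M" are not handled.
_BINARY_UNITS = {"Ki": 1024, "Mi": 1024**2, "Gi": 1024**3}


def parse_memory(quantity: str) -> int:
    """Convert a memory quantity such as '256Mi' to bytes."""
    for suffix, factor in _BINARY_UNITS.items():
        if quantity.endswith(suffix):
            return int(quantity[: -len(suffix)]) * factor
    return int(quantity)  # no suffix: plain bytes


def to_mebibytes(num_bytes: int) -> str:
    """Render a byte count as an 'Mi' quantity, rounding up."""
    return f"{-(-num_bytes // 1024**2)}Mi"


def suggest_oom_fixes(pod: dict, bump: float = 1.5) -> dict:
    """Map container name -> suggested memory limit for every container whose
    last termination reason was OOMKilled. The 1.5x bump is an arbitrary default."""
    limits = {
        c["name"]: (c.get("resources", {}).get("limits") or {}).get("memory")
        for c in pod["spec"]["containers"]
    }
    suggestions = {}
    for status in pod.get("status", {}).get("containerStatuses", []):
        terminated = status.get("lastState", {}).get("terminated", {})
        if terminated.get("reason") != "OOMKilled":
            continue
        current = limits.get(status["name"])
        if current is None:
            continue  # no limit to scale; leave this one to a human
        suggestions[status["name"]] = to_mebibytes(int(parse_memory(current) * bump))
    return suggestions
```

For a pod whose `api` container has a `256Mi` limit and was last OOM-killed, `suggest_oom_fixes` proposes `384Mi`. In a real server this function would be registered as a tool (the official MCP Python SDK, for example, offers a `FastMCP` class with a `@mcp.tool()` decorator), and actually applying the patch would sit behind policy guardrails such as the Kyverno policies covered later in this post.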

HolmesGPT, now a CNCF Sandbox project, applies that pattern to incident response: it correlates events and traces to diagnose infrastructure problems without an engineer stepping through runbooks by hand. It’s early days, but the signal is clear: operational runbooks are becoming inputs for autonomous agents, not just documents that engineers read.
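We have not looked at HolmesGPT’s internals, so the sketch below only illustrates the general idea of correlating Kubernetes events, under our own assumptions: events are grouped by the object they concern (`involvedObject`), and events on the same object that arrive within a fixed five-minute window of each other are treated as one candidate incident.

```python
# Hypothetical event-correlation sketch: group Kubernetes Events by involved
# object, then split each group into bursts of closely spaced events.
from collections import defaultdict
from datetime import datetime, timedelta


def _ts(event: dict) -> datetime:
    # Event timestamps are RFC 3339 strings such as "2026-03-24T10:00:00Z".
    return datetime.fromisoformat(event["lastTimestamp"].replace("Z", "+00:00"))


def correlate_events(events: list, window: timedelta = timedelta(minutes=5)) -> list:
    """Return a list of {"object": (namespace, kind, name), "reasons": [...]}
    where each entry is one burst of events on the same object."""
    by_object = defaultdict(list)
    for ev in events:
        obj = ev["involvedObject"]
        by_object[(obj.get("namespace", ""), obj["kind"], obj["name"])].append(ev)

    incidents = []
    for key, group in by_object.items():
        group.sort(key=_ts)
        burst = [group[0]]
        for ev in group[1:]:
            if _ts(ev) - _ts(burst[-1]) <= window:
                burst.append(ev)  # close enough in time: same incident
            else:
                incidents.append({"object": key, "reasons": [e["reason"] for e in burst]})
                burst = [ev]  # gap too large: start a new incident
        incidents.append({"object": key, "reasons": [e["reason"] for e in burst]})
    return incidents
```

An agent would then pass each burst, together with traces and the relevant runbook, to a model for diagnosis; that step is out of scope for this sketch.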

Kyverno graduated to CNCF’s highest maturity level, joining a small group of projects that have demonstrated enterprise-scale production adoption. Bloomberg, Coinbase, Spotify, Deutsche Telekom, and Vodafone are among the enterprise adopters on record. Kyverno now fully adopts Common Expression Language (CEL), aligning with Kubernetes’ admission control direction and enabling more expressive policy logic. The next version is already extending into AI and MCP gateway governance, bringing the same declarative policy model to AI agent authorization.

The practical implication is straightforward: invest in policy-as-code now. The agent frameworks will mature quickly, and teams that already have governance guardrails in place will be able to extend them to AI workloads instead of starting from scratch.
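Kyverno policies are written in YAML with CEL expressions, so the Python below is not Kyverno syntax or API. It is a hypothetical rendering of the logic a simple admission rule might encode (“every container in a Pod must declare a memory limit”), to show the shape of policy-as-code: a pure function from a resource to an allow or deny decision with a reason.

```python
# Hypothetical admission check, written in Python only to illustrate the logic
# a Kyverno CEL validation rule might express. Not Kyverno's API.
from dataclasses import dataclass


@dataclass
class Decision:
    allowed: bool
    message: str = ""


def require_memory_limits(pod: dict) -> Decision:
    """Deny any Pod in which a container or init container lacks a memory limit."""
    spec = pod.get("spec", {})
    containers = spec.get("containers", []) + spec.get("initContainers", [])
    missing = [
        c["name"]
        for c in containers
        if "memory" not in (c.get("resources", {}).get("limits") or {})
    ]
    if missing:
        return Decision(False, f"memory limit required for: {', '.join(missing)}")
    return Decision(True)
```

The same kind of pure decision function could, in principle, gate an agent’s tool call before it touches the cluster, which is the direction the talk described for Kyverno’s AI and MCP gateway governance.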

Will AI replace platform engineers?

No, and KubeCon made the case for why not. The complexity introduced by AI workloads creates more platform engineering work, not less. Inference scheduling, model-serving infrastructure, AI traffic management, GPU resource governance, safety sandboxing: none of that runs itself. Someone must design the abstractions that make it manageable, enforce the policies that make it safe, and build the self-service layer that keeps developers out of the infrastructure weeds. That someone is a platform engineer.

Nana Janashia made this point directly at KubeAuto Day. AI is changing what platform engineers build, not eliminating the role. The teams feeling threatened are the ones waiting to see how the tooling settles. The teams pulling ahead are the ones treating the new primitives as an extension of work they already know: resource management, policy enforcement, and developer experience.

The MCP server pattern is a good example. Writing a custom MCP server that connects an AI agent to your infrastructure tooling is not a data science problem. It is a platform engineering problem. The same is true for GPU resource governance via DRA, inference traffic routing via the Gateway API, and agent authorization via Kyverno.

The skill set is expanding, not being replaced. Engineers who start building familiarity with these primitives now will be well-positioned as the tooling matures. Those who wait for a stable, well-documented path will find themselves a long way behind teams that were already building on it.

European digital sovereignty moved from theory to architecture

This was the European edition of KubeCon, and it showed. The sovereignty narrative shifted from “we should think about this” to “here’s our reference architecture and it’s in production.”

Swisscom presented the first sovereign Kubernetes architecture published as an official CNCF reference architecture. Built on KubeOne, KubeVirt, Kyverno, and Argo CD, it let Swisscom migrate 60% of its internal workloads within nine months. The explicit goal: architectural independence from US CLOUD Act jurisdiction over its data.

The takeaway is that sovereignty isn’t about rebuilding your infrastructure from scratch. It’s about retaining the freedom to change where you run, what you run on, and how it’s connected, without rewriting your applications. That’s achievable with open source tooling today.

The EU Cyber Resilience Act has a deadline, and it’s closer than you think

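Greg Kroah-Hartman, maintainer of the Linux kernel’s stable branch, presented the EU Cyber Resilience Act (EU 2024/2847) timeline with firm dates: reporting obligations for actively exploited vulnerabilities apply from September 11, 2026, and the Act is fully enforced from December 11, 2027.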

If you ship software to EU customers, you have three action items to complete before September:

- Integrate SBOM generation into your CI/CD pipeline. The CRA requires a Software Bill of Materials in a commonly used, machine-readable format (in practice SPDX or CycloneDX), covering at least the top-level dependencies and kept current with every release. The Dalec project (contributed by Microsoft at KubeCon) provides declarative package building with SBOM generation and provenance attestations built in.

- Build a vulnerability disclosure process with a 24-hour SLA. From September 11, the CRA requires an early warning within 24 hours of becoming aware of an actively exploited vulnerability, a full vulnerability notification within 72 hours, and a final report within 14 days of a corrective or mitigating measure, such as a patch, becoming available. Reports go through ENISA’s Single Reporting Platform, so this needs a named owner, a triage process, and a registered account on the platform before that date.

- Audit your dependency chain. Dependency management best practices are no longer just good engineering hygiene: under the CRA, they become compliance requirements for anyone shipping software into the EU.
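To make the SBOM item concrete, here is a minimal sketch that emits a CycloneDX 1.5 JSON document for a list of top-level dependencies, the minimum the CRA asks an SBOM to cover. Assumptions: the dependencies are PyPI packages given as (name, version) pairs, and the application name and versions in the example are made up. It does none of the dependency resolution or provenance attestation a dedicated tool such as Dalec provides.

```python
# Minimal CycloneDX JSON SBOM builder. A sketch only: real pipelines should use
# a dedicated SBOM generator that resolves the full dependency graph.
import json
import uuid


def purl_pypi(name: str, version: str) -> str:
    # Package URL for a PyPI package: name lowercased, '_' replaced by '-'.
    return f"pkg:pypi/{name.lower().replace('_', '-')}@{version}"


def make_sbom(app_name: str, app_version: str, deps: list) -> dict:
    """Build a CycloneDX 1.5 document listing top-level (name, version) deps."""
    return {
        "bomFormat": "CycloneDX",
        "specVersion": "1.5",
        "serialNumber": f"urn:uuid:{uuid.uuid4()}",  # unique per generated BOM
        "version": 1,
        "metadata": {
            "component": {"type": "application", "name": app_name, "version": app_version}
        },
        "components": [
            {"type": "library", "name": n, "version": v, "purl": purl_pypi(n, v)}
            for n, v in deps
        ],
    }


if __name__ == "__main__":
    sbom = make_sbom("shop-api", "1.4.0", [("requests", "2.32.3"), ("typing_extensions", "4.12.2")])
    print(json.dumps(sbom, indent=2))
```

Running this as a CI step on every release, and storing the output next to the build artifact, is the simplest way to keep the SBOM in lockstep with what actually ships.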

September is five months away. Enough time to act, not enough time to wait for the next quarterly planning cycle.
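The reporting clocks are easy to get wrong under pressure, so it is worth encoding them in whatever tracks your incidents. A minimal sketch, assuming timezone-aware UTC timestamps; the field names are ours, not ENISA’s. Article 14 of the CRA frames the 14-day step as a final report due after a corrective or mitigating measure becomes available, and the sketch follows that reading.

```python
# Sketch of the CRA reporting clock for an actively exploited vulnerability.
# Deadlines are counted from when the manufacturer became aware of it.
from datetime import datetime, timedelta, timezone
from typing import Optional


def cra_deadlines(aware_at: datetime, fix_available_at: Optional[datetime] = None) -> dict:
    deadlines = {
        "early_warning": aware_at + timedelta(hours=24),
        "vulnerability_notification": aware_at + timedelta(hours=72),
    }
    if fix_available_at is not None:
        # Final report: no later than 14 days after a corrective or mitigating measure.
        deadlines["final_report"] = fix_available_at + timedelta(days=14)
    return deadlines


if __name__ == "__main__":
    aware = datetime(2026, 9, 14, 9, 0, tzinfo=timezone.utc)
    for step, due in cra_deadlines(aware, datetime(2026, 9, 20, 12, 0, tzinfo=timezone.utc)).items():
        print(step, due.isoformat())
```

Attaching these timestamps to the incident ticket the moment triage confirms active exploitation gives the named owner an unambiguous due date for each report.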

Conclusion

One thing we didn’t anticipate from a conference this size: some of the most useful conversations happened at vendor booths. Walking in with real challenges from active projects, rather than general questions, opened genuinely technical discussions. Rather than product demos, we got into specifics: directions worth exploring, what hadn’t worked for similar teams, and concrete test approaches. If you’re going to KubeCon for the first time, treat those conversations as free consulting opportunities rather than something to skip.

KubeCon Amsterdam 2026 left us with three things platform teams should be acting on now.

- Kubernetes AI infrastructure is standardizing faster than most teams are moving. DRA is GA, NVIDIA and Google are contributing openly, and conformance certification is expanding quickly. Teams that haven’t assessed their cluster architecture for AI readiness are already behind reference implementations that are in production.

- The AI tools being built today in the CNCF Sandbox (Kagent, HolmesGPT, the emerging inference stack) run on Kubernetes. They require exactly the kind of production-grade foundation that most teams haven’t finished building. That’s not a reason to deprioritize them. It’s a reason to get the foundation right while the AI layer matures.

- The EU Cyber Resilience Act is real, and the September 2026 deadline is closer than it feels. SBOM generation, vulnerability disclosure processes, and supply chain practices are not theoretical; they are compliance requirements for anyone shipping software into the EU.

As a Kubernetes Certified Service Provider, we work with platform teams navigating exactly these decisions, from AI infrastructure readiness to CRA compliance planning and governance architecture. If you’re working through any of these challenges, get in touch with our team and let’s talk through where your platform stands.

By Adrian Marcu, Sergiu Corban, and Robert Boseanu
