The need for AI workshops

On the morning of one workshop, a developer described how he’d been working with AI. He kept one chat window open all day and piled every task into the same conversation. By mid-afternoon, the model had started drifting: it gave wrong answers, hallucinated function names, and produced code that didn’t compile. The context window was full. The model wasn’t having a bad day; it was doing what any model does when its context overflows with data.

That single moment captured why we ran these workshops in the first place.

How we ended up giving three workshops in the same week

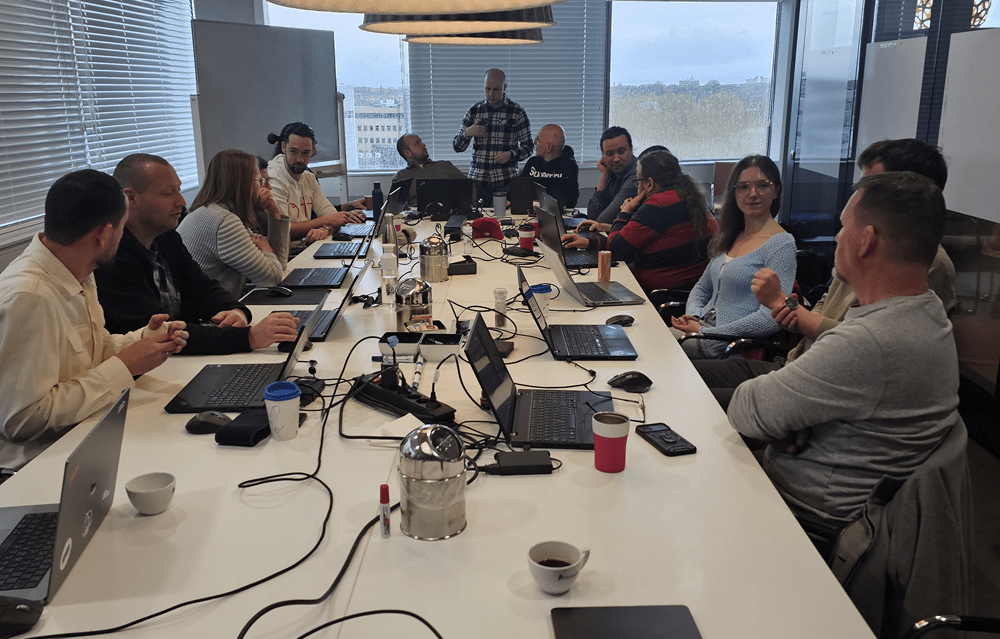

From 25 to 27 March 2026, Mihai Bob (Architect), Andrei Solomon (Tech Lead), and I delivered three back-to-back AI Adoption Accelerator workshops for three companies in the TSS group: PinkRoccade Local Government (PRLG) and TANS in the Netherlands, and prohandel in Germany.

Each company approached us with a different starting point.

- PRLG’s R&D team was already using Copilot for refactoring and development but wanted to move from code completion toward structured task automation.

- The TANS team was preparing for a Delphi-to-.NET modernization and wanted to build shared AI fluency across roles: developers, testers, and PMs.

- The prohandel team was planning to rewrite a Progress OpenEdge ERP system as .NET Core microservices and wanted to know what AI could do beyond the IDE (integrated development environment).

Different companies, different industries, different stacks, different tools, but the same underlying gap. In all three, AI adoption was uneven: a handful of people had sophisticated workflows, while most still used AI ad hoc, copying snippets into a chat window, with no instruction files or shared playbooks.

One framework, three flavors

Because each company started from a different place, we didn’t run the same workshop three times. We ran three different workshops built on the same foundation.

The foundation was simple: thirty percent knowledge sharing, seventy percent hands-on. Mihai kicked off with a session called AI at Work: Greenfield vs Brownfield, framing where AI actually delivers value in an enterprise codebase. Andrei followed with AI-Assisted Fundamentals, covering prompting techniques, instruction files, and context management. Then we got out of the way.

The variation was in the tools. PRLG worked with Claude Code, TANS used GitHub Copilot, and prohandel used Cursor. We deliberately built three tool-specific decks rather than mixing terminology. It cost us prep time, but it paid for itself twice over when participants wrote down terms like “playbook” or “instruction file” in the same vocabulary their own tools use.

We also asked each team to submit one or two real project topics in advance. No toy to-do lists: people learn faster when they’re solving a problem they actually own.

What the participants taught us

At the end of each day, every team presented its results. Their reflections followed an almost identical pattern, which surprised us.

“Asking AI to help you work better with AI will actually generate better prompts,” a TANS team member remarked. Others said that “correcting mistakes within the same context is a no-go” and “don’t get lost in prompt-file engineering, just start trying.” Nobody walked out saying they had learned to write better prompts. They walked out saying they had learned to think differently about working with the model.

Three themes surfaced at all three workshops:

- Context engineering matters more than prompt phrasing. Knowing which files to reference and when to reset a conversation beats clever wording every time.

- Specs come before code. The teams that produced the best working software were the ones that spent the most time defining what they wanted before asking AI to build it. The better the specs up front, the better the software at the end.

- Don’t argue with the model. If it makes a wrong decision, reset and re-prompt. Don’t waste fifteen minutes negotiating with it.

The numbers backed this up. The post-workshop survey scored 4.6 out of 5 for relevance to daily work, with an NPS of 53 and zero detractors.

In a follow-up email, Rudy from TANS wrote:

“I think it was a great success. The theory presented by Mihai and Andrei was good, and they followed it up with useful interaction during the hands-on session. Today I discussed it with our developers, and they are now really eager to use the things they have learned.”

That’s the line that matters to us. It’s nice that the workshop ended well; it’s far better that Monday morning started differently.

Behind the scenes

I’ll be honest: I was nervous. I’m not used to presenting in front of an audience, and I spent the night before the first workshop convinced I would freeze during the kickoff. Mihai was nervous too, worried about being asked a question he couldn’t answer. I told him, “If you don’t know, just say it’s a great question and you’ll look it up. There’s no harm in that.” I told myself the same thing.

But it turned out that the hardest part wasn’t presenting; it was the preparation. We had to prepare three decks for three tools and three tech stacks, all in the same week, and then keep the energy high for three days straight. By day three, we were running on caffeine and adrenaline. Our conclusion: two back-to-back workshops would have been ideal; three was a stretch.

We also got a few things wrong, as one participant bluntly pointed out in the post-workshop survey: “The communication regarding the use cases was unclear.” They were right. At TANS, some participants expected us to hand them a topic, even though we had asked them to bring their own; looking back, we undercommunicated what “bring your own topic” actually meant. At prohandel, participants preferred that we model the workflow alongside them rather than hand it over right away. Once Andrei and Mihai started working side by side with the developers, the energy in the room shifted.

The most rewarding surprise was watching how creative the participants were once the friction wore off. A few of them, when stuck, simply asked the AI itself how to write the next prompt, a great move that, frankly, none of us had taught them.

What we would do differently next time

There are two things we would change, both logistical:

- Set clear, concise expectations up front. A clearer pre-workshop brief on what “bring your own topic” means would have spared us the on-the-fly adjustments.

- Bring more prepared examples. In the feedback, participants specifically asked for cheat sheets and short demos, which are exactly what help “show me how” learners find their footing before working independently.

The biggest takeaway, though, is one we didn’t expect. AI adoption is rarely about prompts, models, or tools. It’s about giving teams the vocabulary and the safe space to experiment together. Once they have that, they teach themselves.

If your team has the same gap, with some people running structured AI workflows while others are still figuring out where to start, reach out. We would love to talk to you.

By Sorin Checherita,

Project Manager @ Yonder
